Why I've Been Merging Microservices Back Into The Monolith At InVision

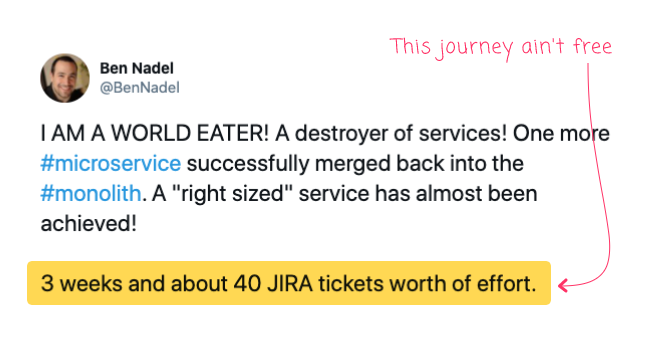

If you follow me on Twitter, you may notice that every now and then I post a celebratory tweet about merging one of our microservices back into the monolith at InVision. My tweets are usually accompanied by a Thanos GIF in which Thanos is returning the last Infinity Stone to the Infinity Gauntlet. I find this GIF quite fitting as the reuniting of the stones gives Thanos immense power; much in the same way that reuniting the microservices gives me and my team power. I've been asked several times as to why it is that I am killing-off my microservices. So, I wanted to share a bit more insight about this particular journey in the world of web application development.

I Am Not "Anti-Microservices"

To be very clear, I wanted to start this post off by stating unequivocally that I am not anti-microservices. My merging of services back into the monolith is not some crusade to get microservices out of my life. This quest is intended to "right size" the monolith. What I am doing is solving a pain-point for my team. If it weren't reducing friction, I wouldn't spend so much time (and opportunity cost) lifting, shifting, and refactoring old code.

Every time I do this, I run the risk of introducing new bugs and breaking the user experience. Merging microservices back into the monolith, while sometimes exhilarating, is always terrifying; and, represents a Master Class in planning, risk reduction, and testing. Again, if it weren't worth doing, I wouldn't be doing it.

Microservices Solve Both Technical and People Problems

In order to understand why I am destroying some microservices, it's important to understand why microservices get created in the first place. Microservices solve two types of problems: Technical problems and People problems.

A Technical problem is one in which an aspect of the application is putting an undue burden on the infrastructure; which, in turn, is likely causing a poor user experience (UX). For example, image processing requires a lot of CPU. If this CPU load becomes too great, it could start starving the rest of the application of processing resources. This could affect system latency. And, if it gets bad enough, it could start affecting system availability.

A People problem, on the other hand, has little to do with the application at all and everything to do with how your team is organized. The more people you have working in any given part of the application, the slower and more error-prone development and deployment becomes. For example, if you have 30 engineers all competing to "Continuously Deploy" (CD) the same service, you're going to get a lot of queuing; which means, a lot of engineers that could otherwise be shipping product are actually sitting around waiting for their turn to deploy.
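A back-of-the-envelope sketch of that queuing effect, in Python. The numbers (a 10-minute deploy, 30 engineers, 6 teams) and the helper name `average_wait_minutes` are assumptions for illustration, not measurements from InVision. It also assumes the worst case, where everyone wants to deploy at once and deploys run strictly one at a time.

```python
def average_wait_minutes(engineers_waiting: int, deploy_minutes: float) -> float:
    """Average time spent waiting when deploys run strictly one at a time.

    The engineer in position k (0-based) waits for the k deploys ahead of them.
    """
    if engineers_waiting <= 0:
        return 0.0
    total_wait = sum(position * deploy_minutes for position in range(engineers_waiting))
    return total_wait / engineers_waiting


# Hypothetical numbers: every deploy takes 10 minutes.
one_shared_queue = average_wait_minutes(30, 10)  # all 30 engineers share one service
six_team_queues = average_wait_minutes(5, 10)    # same 30 engineers, 6 services of 5

print(one_shared_queue, six_team_queues)
```

Under these assumptions, the shared queue averages 145 minutes of waiting per engineer, versus 20 minutes when the same people are spread over six independent queues.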

Early InVision Microservices Mostly Solved "People" Problems

InVision has been a monolithic system since its onset 8-years ago when 3 engineers were working on it. As the company began to grow and gain traction, the number of systems barely increased while the size of the engineering team began to grow rapidly. In a few years, we had dozens of engineers - both back-end and front-end - all working on the same codebase and all deploying to the same service queue.

As I mentioned above, having a lot of people all working in the same place can become very problematic. Not only were the various teams all competing for the same deployment resources, it meant that every time an "Incident" was declared, several teams' code had to get rolled-back; and, no team could deploy while an incident was being managed. As you can imagine, this was causing a lot of friction across the organization, both for the engineering team and for the product team.

And so, "microservices" were born to solve the "People problem". A select group of engineers started drawing boundaries around parts of the application that they felt corresponded to team boundaries. This was done so that teams could work more independently, deploy independently, and ship more product. Early InVision microservices had almost nothing to do with solving technical problems.

Conway's Law Is Good If Your Boundaries Are Good

If you work with microservices, you've undoubtedly heard of "Conway's Law", introduced by Melvin Conway in 1967. It states:

Any organization that designs a system (defined broadly) will produce a design whose structure is a copy of the organization's communication structure.

This law is often illustrated with a "compiler" example:

If you have four groups working on a compiler, you'll get a 4-pass compiler.

The idea here being that the solution is "optimized" around team structures (and team communication overhead) and not necessarily designed to solve any particular technical or performance issues.

In the world before microservices, Conway's Law was generally discussed in a negative light. As in, Conway's Law represented poor planning and organization of your application. But, in a post-microservices world, Conway's Law is given much more latitude. Because, as it turns out, if you can break your system up into a set of independent services with cohesive boundaries, you can ship more product with fewer bugs because you've created teams that are much more focused, working on a set of services that entail a narrower set of responsibilities.

Of course, the benefits of Conway's Law depend heavily on where you draw boundaries; and, how those boundaries evolve over time. And this is where me and my team - the Rainbow Team - come into the picture.

Over the years, InVision has had to evolve from both an organizational and an infrastructure standpoint. What this means is that, under the hood, there is an older "legacy" platform and a growing "modern" platform. As more of our teams migrate to the "modern" platform, the services for which those teams were responsible need to get handed-off to the remaining "legacy" teams.

Today - in 2020 - my team is the legacy team. My team has slowly but steadily become responsible for more and more services. Which means: fewer people but more repositories, more programming languages, more databases, more monitoring dashboards, more error logs, and more late-night pages.

In short, all the benefits of Conway's Law for the organization have become liabilities over time for my "legacy" team. And so, we've been trying to "right size" our domain of responsibility, bringing balance back to Conway's Law. Or, in other words, we're trying to alter our service boundaries to match our team boundary. Which means, merging microservices back into the monolith.

Microservices Are Not "Micro", They Are "Right Sized"

Perhaps the worst thing that's ever happened to the microservices architecture is the term, "micro". Micro is a meaningless but heavily loaded term that's practically dripping with historical connotations and human bias. A far more helpful term would have been, "right sized". Microservices were never intended to be "small services", they were intended to be "right sized services."

"Micro" is apropos of nothing; it means nothing; it entails nothing. "Right sized", on the other hand, entails that a service has been appropriately designed to meet its requirements: it is responsible for the "right amount" of functionality. And, what's "right" is not a static notion - it is dependent on the team, its skill-set, the state of the organization, the calculated return-on-investment (ROI), the cost of ownership, and the moment in time in which that service is operating.

For my team, "right sized" means fewer repositories, fewer deployment queues, fewer languages, and fewer operational dashboards. For my rather small team, "right sized" is more about "People" than it is about "Technology". So, in the same way that InVision originally introduced microservices to solve "People problems", my team is now destroying those very same microservices in order to solve "People problems".

The gesture is the same, the manifestation is different.

I am extremely proud of my team and our efforts on the legacy platform. We are a small band of warriors; but we accomplish quite a lot with what we have. I attribute this success to our deep knowledge of the legacy platform; our aggressive pragmatism; and, our continued efforts to design a system that speaks to our abilities rather than an attempt to expand our abilities to match our system demands. That might sound narrow-minded; but, it is the only approach that is tenable for our team and its resources at this moment in time.

Epilogue: Most Technology Doesn't Have to "Scale Independently"

One of the arguments in favor of creating independent services is the idea that those services can then "scale independently". Meaning, you can be more targeted in how you provision servers and databases to meet service demands. So, rather than creating massive services to scale only a portion of the functionality, you can leave some services small while independently scaling-up other services.

Of all the reasons as to why independent services are a "Good Thing", this one gets used very often but is, in my (very limited) opinion, usually irrelevant. Unless a piece of functionality is CPU bound or IO bound or Memory bound, independent scalability is probably not the "ility" you have to worry about. Much of the time, your servers are waiting for things to do; adding "more HTTP route handlers" to an application is not going to suddenly drain it of all of its resources.

If I could go back and redo our early microservice attempts, I would 100% start by focusing on all the "CPU bound" functionality first: image processing and resizing, thumbnail generation, PDF exporting, PDF importing, file versioning with rdiff, ZIP archive generation. I would have broken teams out along those boundaries, and have them create "pure" services that dealt with nothing but Inputs and Outputs (ie, no "integration databases", no "shared file systems") such that every other service could consume them while maintaining loose-coupling.
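As a rough illustration of what such a "pure" boundary could look like, here is a minimal Python sketch built around ZIP archive generation, one of the CPU-bound tasks listed above. The function name `build_zip_archive` and its signature are hypothetical and are not taken from InVision's codebase. The point is only that everything the service needs arrives as input and everything it produces leaves as output.

```python
import io
import zipfile


def build_zip_archive(files: dict[str, bytes]) -> bytes:
    """Return the bytes of a ZIP archive containing the given files.

    No database lookups and no shared file system: the caller supplies
    every file as (name -> content) and gets the archive back as bytes.
    """
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, mode="w", compression=zipfile.ZIP_DEFLATED) as archive:
        for name, content in files.items():
            archive.writestr(name, content)
    return buffer.getvalue()


# Hypothetical caller: any other service could hand over raw bytes like this.
archive_bytes = build_zip_archive({
    "readme.txt": b"hello",
    "screens/home.txt": b"placeholder screen data",
})
```

Because the function touches nothing outside its arguments, it could sit behind an HTTP endpoint or a queue worker without the consumer knowing (or caring) how it is deployed.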

I'm not saying this would have solved all our problems - after all, we had more "people" problems than we did "technology" problems; but, it would have solved some more of the "right" problems, which may have made life a bit easier in the long-run.

Epilogue: Microservices Also Have a Dollars-And-Cents Cost

Services don't run in the abstract: they run on servers and talk to databases and report metrics and generate log entries. All of that has a very real dollars-and-cents cost. So while your "lambda function" doesn't cost you money when you're not using it, your "microservices" most certainly do. Especially when you consider the redundancy that you need to maintain in order to create a "highly available" system.

My team's merging of microservices back into the monolith has had an actual impact on the bottom-line of the business (in a good way). It's not massive - we're only talking about a few small services; but, it's not zero either. So, in addition to all of the "People" benefits we get from merging the systems together, we also get a dollars-and-cents benefit.

Reader Comments

Great read, and so spot on!

Too often we hear at conferences or read on the web that the "new way of developing is breaking up your monolith into microservices" without talking about the potential downsides we will have to deal with by doing so.

It's not about refactoring your code to keep ourselves busy, it's about the right tools and methods for the right job. You have captured this very elegantly in this post and also put some reality into it by sharing why you are doing what you are doing at InVision.

Thank you!

@Gerald,

Thank you for the kind words. It's always tough with the new shiny thing! Developers generally want to play with the cool new tools. And, given the fact that the average job in this industry is only 2-years, we don't all get the benefit of having to live with choices and see what impact they have over time. I count myself as quite lucky to be working on the same application for over 8-years now as it gives me a perspective that not everybody gets to have.

@ben This is a fantastic post and you've learned some great insights that can only come with real world experience!

Firstly, great application of Conway's Law. I was actually trying to remember the name of that law just a couple weeks back and I couldn't recall it to save my life. Your post instantly reminded me and it's a very true observation as well.

Secondly, I always dislike naming a team the "legacy" team. I think it's a bit demoralizing to those on it and implies that code is somehow not as good or useful to the org. If you're using best practices, source control, tests where possible, the CI/CD, consider calling it the "core team" or "foundation team" ;)

Lastly, you've made a great observation that there can be good value in keeping parts of the application together to reduce the footprint of repos, builds, and tests. Shameless ColdBox MVC plug incoming. This is where the power of a modular architecture comes in. It's great to break up a large app into a modular setup where you have a nice separation of M, V, and C into bite sized chunks with clear lines of responsibility while still living in the same repo and part of the same base app. The apps we build often times have 5-10 core modules where we break out functions to keep a nice organisation and always leave open the possibility to break some off into a separate app in the future, but without the overhead of completely separate microservices. I think this is a great "middle ground" as you've shown.

@Brad,

Thank you for the kind words. We actually joke on our team that we should be the legendary team, not the legacy one :D

I think part of our problem is that the internal boundaries within the monolith are not great. To your point, when you keep it really modular inside the service boundaries, it's easier for more teams to participate because they don't necessarily have to understand "all the things" in order to get work done. I wouldn't say that we have a big ball of mud; but, we also don't have a really clean separation of concerns. I mean, "new code" is better than the old code; but, we do have loads of old code at this point.

Great insight Ben, thank you :)

@Tom,

My pleasure - I'm glad it landed well :)

Ben, nice read; however, the bit I don't understand is why a 'legacy' team should mean fewer people but taking on more services/infra etc. Surely the issue here is a budget/organizational one, not a boundary one.

What happens when your team scales back up, as it should for the amount of responsibility? You're back to the old problem.

I personally experienced maintenance burden that comes with microservices. I was splitting monolith when both team and product were small. We didn't solve "People" or "Technical" problem at all - just exploring a buzzword. Maybe, if I could read Your post then, we could be spared hours of pointless work.

Thank You, great read.

@Konrad,

It's so hard! We like new things. I remember when I was first starting out and I learned a little bit about "Object Oriented Programming" (OOP). And then, without really any experience, I decided to do a new work project using a lot of OOP. Well, when all was said and done, the project timeline was like 3-times overdue! It was a pile of very confusing, very convoluted code. I had no idea what I was doing. Thankfully my boss at the time was very forgiving.

@Steve,

"Why does a smaller team take on more responsibilities?" -- my team asks ourselves that question every single day 🤣 I believe that was a bit more of an internal "political" question more than anything else. The company didn't want to "burden" all the newer teams with having to be distracted by the legacy code; so, slowly, they started giving more and more of it to us in order to free up the "future" of the product.

It's counter-intuitive, no doubt. But, such is life.

@Ben,

Your comment on your very first experience with OOPS reminds me of the Pilot System as discussed in Fred Brooks' book, The Mythical Man Month.

https://en.wikipedia.org/wiki/The_Mythical_Man-Month#The_pilot_system

It's a good reminder that whenever you learn something new, you'll basically hate your first go at it and want to re-do it. So, if you can, create a test implementation first that you expect to throw away. I certainly hated most of my first attempt to build OOP in CF :)

@Brad,

100% true! And, the saddest thing is, even now, about 15-years later, I still basically don't know much about real object-oriented programming. My use of "Objects" extends mostly to using "Singletons" (though not in the strict sense) to encapsulate glorified procedural scripts and split up some "Data access" components from "Business logic" components.

The closest I've ever come to feeling good about my OOP is when building some utility libraries that have swappable behaviors.

Hi Ben

I am the editor of InfoQ China(https://www.infoq.cn/) which focuses on software development. We like this article and plan to translate it into Chinese. Before we translate it into Chinese and publish it on our website, I want to ask for your permission first! This translation version is provided for informational purposes only, and will not be used for any commercial purpose. In exchange, we will put the English title and link at the end of Chinese article. If our readers want to read more about this, he/she can click back to your website.

Thanks a lot, hope to get your help. Any more question, please let me know.

Thanks,

Levin

@Levin,

Absolutely - I'm a huge fan of InfoQ - it would be an honor to be part of your series of articles. Thank you so much :D

Ben, great post. I especially like the concept of "right-sized" vs "micro". Right-sized is also imprecise, but carries a more directional connotation than "micro".

Mike

@Mike,

I'm glad you enjoyed the post :D

@Ben,

You are spot on there. This is one of the reasons why I think it is so important to hear from fellow developers and the lessons they have learned the hard way so that we don't keep repeating the same mistakes (unintentionally).

Thanks again for the great post and for taking the time to write it!

Cheers,

@Gerald,

My pleasure, good sir. Thanks for reading :D Learning lessons for others is so hard! I feel like we all have to be personally burned before we can truly understand things. The problem is, sometimes that cost is really high.

So Ben what stops your team becoming the dumping ground for things-that-should-have-been-refactored and all that other crufty stuff that exists?

I understand the organisation is optimising for speed ("teams on the modern platform getting rid of legacy"). Would I be right in guessing that those teams don't want to be "slowed down" maintaining and refactoring, "legacy code"?

PS: I love the article and the concept of right-sized micro-services, I'm just wondering if the modern teams are owning their own technical debt or just putting it into your legendary -graveyard-, oops, backlog.

francis

Thanks for sharing this, it's a good read.

Where I would not agree is where you talk about right-sized services. A microservice should be - IMHO - very encapsulating but also small. If not it's just a normal application or what you would call a right-sized service, no?

@Ben,

This is the Chinese link of this article: https://www.infoq.cn/article/o6kcqCSGBTmeTbOP4wG1

Thank you again for your help.

Levin

@Levin,

So cool! This is very exciting for me :D

@Antiphp,

It's an interesting question. And, I think perhaps this gets to the heart of the "intent" vs. the "implementation" of a microservice. For me, one of the ways I think about a microservice is from a "Build vs. Buy" point-of-view. Meaning, every team has a service that they could build internally; or, one that they could choose to buy (as a third-party service). The decision usually (should) come down to whether or not the given service is a differentiator for the business itself.

Take, for example, managing WebSocket connections. At work, we use Pusher as our 3rd-party WebSocket provider since we're not really in the "business of managing WebSockets". The Pusher API is super simple (at least, the way we use it). It's basically one API end-point that allows us to push JSON payloads that then get pushed-out to all subscribed clients / channels.

Now, you could look at Pusher as a "microservice" from the consumer's point of view. It provides a very cohesive set of functionality with a very minimal API. You could imagine that if we were to build this type of service internally, it would be its own service.

However, under the hood, it's probably a massive infrastructure with loads of dependencies and complexities and scaling issues and data storage concerns and whatnot. And, it probably has its own set of microservices that we - as consumers - never have to know about.

Of course, did Pusher always operate at the same level of complexity? Probably not. I would hazard a guess that in the early days, when they had very few customers, they only had enough complexity to get the job done. Then, over time, as they needed to get more complex, and handle more scale, the internal implementation started to evolve.

However, from my (the consumer) point-of-view, nothing ever changed. I've still just been hitting that one API to push JSON to users. To me, the Pusher vendor has always been a "service" that I call because it manages the functionality that I need.

And, this is how I think about "right sized" services. There really is no "monolith" or "microservice"; there is simply a service that does "A Thing"; and, hopefully does it well.

@Francis,

What stops my team from becoming the dumping ground for old services?

Unfortunately, very little it seems 😭 . We've had to take over services that we know very little about. I think we even own one or two Golang services and literally no one on my team is proficient in Golang.

We've been able to push back on some attempts by basically throwing a tantrum that another team has the audacity to foist responsibility onto us. But, it's been hit-and-miss as to how much that works.

@All,

Me and some people just had a more in-depth discussion about this topic on the Working Code podcast:

www.bennadel.com/blog/3963-working-code-podcast-episode-005-monoliths-vs-microservices.htm

@Ben,

hi and thank you for your article! Just to let you know: there is a Russian translation of this article as well. You can find it here: https://habr.com/ru/company/flant/blog/540406/

@Dmitry,

Oh, that's awesome! Thank you :D

@All,

If anyone is curious, I just merged another Go service back into our ColdFusion monolith:

www.bennadel.com/blog/4020-a-peek-into-the-interstitial-cost-of-microservices.htm

This recent subsumption was very interesting (to me) because it was very small in scope: two Go routes which map directly onto two ColdFusion routes. As such, it was really easy to isolate and see the performance impact.

That said, I now wonder if the big change in performance relates to the overhead of the CFHTTP call that Brad Wood was recently talking about on the Modernize or Die podcast.

Spot on. I am quite late here but this blog summarizes a lot of resources I read on the internet today on this topic. Explained all the stuff in great detail. The GIF of Thanos is quite impressive and relevant.

Thanks, Ben Nadel

@Pravin,

It's such a strange time. I see a lot of people walking back the idea of microservices. Even most of the big advocates are pushing that you should stick with a "modular monolith" until you really really need microservices.

But, at the same time, there's a cohort of people who, I feel, are doubling-down on the microservices philosophy, pushing more logic into Edge functions and breaking UIs up into "islands". It will be curious to see what the next few years look like.