The canonicalize() Function Will Decode Strings That "Loosely Match" HTML Entities In Lucee CFML 5.3.5.92

Yesterday, I started running into an interesting issue when using the canonicalize() function in Lucee CFML to normalize the encoding of a given URL. The result of the canonicalize() call was leaving some URL search-parameters intact while corrupting others. At first, it seemed completely random. But, after digging into it for a bit, I realized that the canonicalize() call was decoding substrings within the URL that looked like HTML entities. To demonstrate, I was able to isolate the issue in Lucee CFML 5.3.5.92.

According to the MDN (Mozilla Developer Network) Docs on HTML Entity, and Entity is:

An HTML entity is a piece of text ("string") that begins with an ampersand (

&) and ends with a semicolon (;) . Entities are frequently used to display reserved characters (which would otherwise be interpreted as HTML code), and invisible characters (like non-breaking spaces). You can also use them in place of other characters that are difficult to type with a standard keyboard.

So, strictly-speaking, an HTML Entity should end with a semi-colon. The problem is, the browser tries to be too helpful. And, in fact, will interpret HTML Entity values even if they aren't valid. For example, if my HTML were to contain the phrase:

How will » evaluate?

That » substring - which isn't a valid HTML Entity - won't render as a literal value; instead, the browser will render it as a right-angle quote.

Based on this, my assumption is that the canonicalize() call in Lucee CFML is taking this loose browser behavior into account when it decodes and normalizes strings. This is causing it to decode parts of a string that weren't encoded to begin with.

To see what I mean, let's take a look at the following ColdFusion code where I am constructing a URL that needs to be run through the canonicalize() function:

<cfscript>

// Setup the key-value pairs for our demo URL.

searchParams = [

"action=quantize",

"infinity=false",

"equivalence=fuzzy",

"origin=v7"

];

value = ( "end-point.htm?" & searchParams.toList( "&" ) );

echo( "Lucee " & server.lucee.version );

echo( "<br />" );

echo( "<br />" );

echo( encodeForHtml( canonicalize( value, true, true ) ) );

echo( "<br />" );

echo( "<br />" );

echo( encodeForHtml( value ) );

</cfscript>

ASIDE: Obviously, we wouldn't normally pass an internally-constructed URL to

canonicalize(). Just try to imagine that the URL-in-question is being passed-in from an untrusted source.

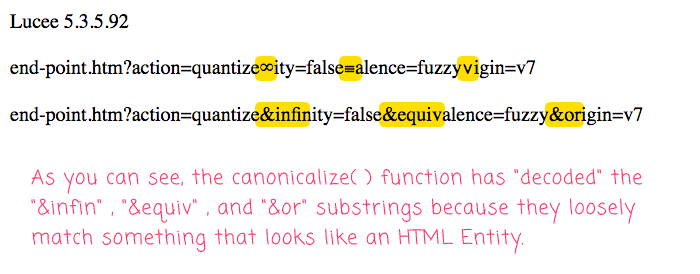

There should be nothing in the search-parameters of this URL that cause concern. However, when we run this Lucee CFML code, we get the following output:

As you can see, the canonicalize() function has "decoded" the following substrings:

&infin&equiv&or

Even though these substrings aren't strictly valid HTML Entities, in so much as they don't end with a semi-colon, the canonicalize() function is treating them as something that might get interpreted by the browser as HTML Entities. This leaves the normalized / sanitized / canonicalized URL as completely invalid.

So, what to do about this?

At this moment, I have no idea. But, I will be consulting with David Epler, our senior application security engineer, to figure out how to deal with case. And, I'll leave updates in the comments below.

It could just be that we're not using the canonicalize() function as it was intended to be used. To be honest, it's a function that confuses me a bit. I'm not quite sure when and where it's supposed to be applied.

Want to use code from this post? Check out the license.

Reader Comments

Since the point of the canonicalize() method is to try to sanitize string and make them safe from XSS exploits (which encodes normalizing multiple encoded strings), I would say this would be the expected behavior.

Since browser can interpret the strings as HTML entities, it makes sense canonicalize() would too.

I assume you're using the canonicalize() function on unsafe user input.

Is there a way you can parse out the URLs, replace them with a token holder, validate those URLs separately, canconicalize the string, then put the safe URLs back into the string?

If the input is only a URL, I would just try to validate the URL instead of using the canonicalize() function.

@Dan,

Yeah, basically we're trying to perform a "redirect" after a user logs-in. So, the "login page" receives the redirect URL as a parameter, which is then attempts to sanitize before consuming. So, yeah, we may just have to use a slightly more targeted approach to sanitizing the URL (rather than

canonicalize()).@Ben,

What you could do is parse the URL (using something like https://blog.pengoworks.com/index.cfm/2006/9/27/CFMX-UDF-Parsing-a-URI-into-a-struct), then sanitize the parts separately, then join them back to a URL.

@Dan,

Ha ha, that's funny -- I was just chatting with David Epler on Slack, and he was suggesting that we try something exactly along those lines :D I'm meeting with him later today to discuss in more depth.

@Daemach,

Yeah, that's the approach I'm currently exploring. I think it makes sense.

@Ben,

What happens when an encoded ampersand (

&) is legitimately used as part of the value?If

brand=A&Wis canonicalized, it will return:This is not desirable.

@James,

100%. And, I've actually already run into a situation like that with our existing approach (not including the ideas outlined above). We have a workflow where we have to redirect users to a deep-link in a native app, which has something like

sketch://in the URL. But, the sanitization process that we have in place now collapses the//into/, which breaks the redirect.I currently work-around that by base64-encoding the part of the URL.

The problem is, the redirect process is generic and open-ended; so, right now, we are erring on the side of caution; and then, addressing those issues as we encounter them.

@James,

Actually, if we can find a way to parse the URL on non-encoded

&, then we can canonicalize + encode each URI component after it is split. That could get us around your case.... I think.@All,

Here's my attempt to break a URL apart, canonicalize and encode the individual components, and then join it back together:

www.bennadel.com/blog/3832-canonicalizing-a-url-by-its-individual-components-in-lucee-cfml-5-3-6-61.htm

I am not sure if I really have to canonicalize the search string of the URL. I feel like most of the attacks are in the domain+path portion? But, I'm not really sure.