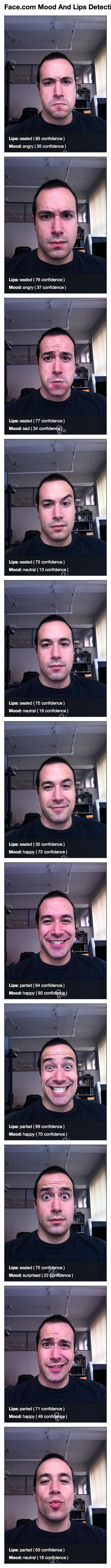

Trying Face.com's Mood And Lips Facial Detection Image API

The other day, I got an email from the Face.com team letting me know that they had recently augmented their facial detection API to include both Mood and Lip analysis. For those of you who don't remember, I recently used the Face.com API to select my Scotch on the Rocks (SOTR) Caffeine Raffle winner. Face.com makes it wicked easy to post a photo using CFHTTP and get all kinds of information about the faces contained within the photo, including position, eyes, ears, mouth, yaw, pitch, roll, etc. With the new Mood and Lips attributes, I figured it would be a fun excuse to make some faces into the camera... in the name of computer science, of course!

When posting a photo to the Face.com API, you can include an "attributes" URL parameter. This value is a comma-delimited list of additional aspects you want analyzed. I believe that the list of possible attributes includes: gender, glasses, smiling, mood, lips, and face. For this experiment, we're only going to ask for "mood" and "lips."
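As a minimal sketch of that parameter (the variable names here are hypothetical, not part of the Face.com API), the comma-delimited list can be built from an array so that attributes are easy to add or remove:

```cfm
<!--- Hypothetical variable names; this post only asks for "mood" and "lips". --->
<cfset requestedAttributes = [ "mood", "lips" ] />

<!--- Collapse the array into the comma-delimited list: "mood,lips". --->
<cfset attributesList = arrayToList( requestedAttributes, "," ) />
```

The resulting string could then be used as the value of the "attributes" URL parameter in place of a hard-coded literal.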

I tried to take a bunch of photos with an array of different expressions. When the results are in, I simply output each photo to the screen with an overlay containing the given attributes (mood and lips) and the percentage confidence that the Face.com API has in its detection.

<!---

Give this page some extra timeout wiggle room since we'll be

uploading multiple images to the Face.com API.

--->

<cfsetting requesttimeout="#(60 * 2)#" />

<!---

Define the path to our images directory. We have 11 photos to

upload that will just be uploaded in numeric order.

--->

<cfset imageDirectory = expandPath( "./images/" ) />

<!--- ----------------------------------------------------- --->

<!--- ----------------------------------------------------- --->

<!---

Create an array to hold detection results. Face.com can only

accept one image at a time, so we'll have to loop over the

photos and post each individually, storing the results in turn.

--->

<cfset results = [] />

<!---

Post these photos to Face.com. Each photo will be analyzed

for faces; each face will be returned as a "Tag" with the

attribute information regarding mood and lips.

--->

<cfloop

index="index"

from="1"

to="11"

step="1">

<!---

Post the photo to the Face.com API.

NOTE: By using a ".json" as the target web service file

extension, we will be getting a JSON response.

--->

<cfhttp

result="detectionRequest"

method="post"

url="http://api.face.com/faces/detect.json">

<!--- Post credentials. --->

<cfhttpparam

type="url"

name="api_key"

value="#request.faceAPIKey#"

/>

<cfhttpparam

type="url"

name="api_secret"

value="#request.faceAPISecret#"

/>

<!---

We want to get mood and lips so add this as a

comma-delimited list of attributes. This will show

up in our "Attributes" property.

--->

<cfhttpparam

type="url"

name="attributes"

value="mood,lips"

/>

<!--- Post the file (as "filename"). --->

<cfhttpparam

type="file"

name="filename"

file="#imageDirectory##index#.jpg"

mimetype="image/jpeg"

/>

</cfhttp>

<!---

Deserialize the facial detection response and save the

data structure for evaluation.

--->

<cfset arrayAppend(

results,

deserializeJSON( detectionRequest.fileContent )

) />

</cfloop>

<!--- ----------------------------------------------------- --->

<!--- ----------------------------------------------------- --->

<!---

At this point, we have passed each image to the Face.com API and

should have detection information. Now, let's output the faces

and the mood / lips properties.

--->

<cfcontent type="text/html" />

<cfoutput>

<!DOCTYPE html>

<html>

<head>

<title>Face.com Mood And Lips Detection</title>

<style type="text/css">

body {

font-family: "helvetica neue" ;

}

div.face {

border: 2px solid ##333333 ;

height: 640px ;

margin: 0px 0px 20px 0px ;

position: relative ;

width: 480px ;

}

div.face img {

display: block ;

height: 640px ;

width: 480px ;

}

div.face ul {

background-color: rgba( 25, 25, 25, .5 ) ;

border-top: 1px solid ##000000 ;

bottom: 0px ;

color: ##FFFFFF ;

font-size: 20px ;

left: 0px ;

list-style-type: none ;

margin: 0px 0px 0px 0px ;

padding: 10px 0px 10px 0px ;

position: absolute ;

width: 480px ;

}

div.face li {

padding: 5px 0px 5px 20px ;

}

</style>

</head>

<body>

<h1>

Face.com Mood And Lips Detection

</h1>

<!--- Output each face. --->

<cfloop

index="index"

from="1"

to="#arrayLen( results )#"

step="1">

<!---

Get the reference to the attributes - our mood /

lips property.

--->

<cfset attributes = results[ index ]

.photos[ 1 ]

.tags[ 1 ]

.attributes

/>

<div class="face">

<img src="./images/#index#.jpg" />

<ul>

<li>

<strong>Lips:</strong>

#attributes.lips.value#

( #attributes.lips.confidence# confidence )

</li>

<li>

<strong>Mood:</strong>

#attributes.mood.value#

( #attributes.mood.confidence# confidence )

</li>

</ul>

</div>

</cfloop>

</body>

</html>

</cfoutput>

As you can see, the code is relatively simple. We post the photos, one at a time, to the Face.com API using CFHTTP. Then, we get back a JSON response. When we run the above code, we get a page with the following photos:

As you can see, it did a pretty decent job. It didn't separate out my "skeptical" face. Nor did it find my "kissing" lips. But, overall, I'm pretty impressed with the results.
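One thing the output loop takes for granted is that Face.com found at least one face in every photo, since it reads results[ index ].photos[ 1 ].tags[ 1 ].attributes directly. As a hedged sketch, a small helper could return an empty struct when no face comes back. Note that getFirstFaceAttributes is a hypothetical name, and the photos / tags / attributes path is taken only from the code in this post, not from the Face.com documentation:

```cfm
<!---
	Hypothetical helper: given one deserialized detection response,
	return the attributes of the first face in the first photo, or
	an empty struct if that path is missing.
--->
<cffunction name="getFirstFaceAttributes" access="public" returntype="struct" output="false">
	<cfargument name="response" type="struct" required="true" />

	<!--- Default to an empty struct when no face is found. --->
	<cfset var faceAttributes = structNew() />

	<!--- Walk the path one step at a time; "and" short-circuits. --->
	<cfif (
		structKeyExists( arguments.response, "photos" ) and
		isArray( arguments.response.photos ) and
		arrayLen( arguments.response.photos ) and
		isStruct( arguments.response.photos[ 1 ] ) and
		structKeyExists( arguments.response.photos[ 1 ], "tags" ) and
		isArray( arguments.response.photos[ 1 ].tags ) and
		arrayLen( arguments.response.photos[ 1 ].tags ) and
		isStruct( arguments.response.photos[ 1 ].tags[ 1 ] ) and
		structKeyExists( arguments.response.photos[ 1 ].tags[ 1 ], "attributes" ) and
		isStruct( arguments.response.photos[ 1 ].tags[ 1 ].attributes )
		)>

		<cfset faceAttributes = arguments.response.photos[ 1 ].tags[ 1 ].attributes />

	</cfif>

	<cfreturn faceAttributes />
</cffunction>
```

With something like this in place, the output loop could call getFirstFaceAttributes( results[ index ] ) and use structKeyExists() to check for the "mood" and "lips" keys before printing them, rather than throwing an error on a photo where no face was detected.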

I've only just barely scratched the surface of the Face.com API. They can do all sorts of things like help you find specified people in photos and group photos with similar individuals. And now, they can even detect mood and lips. I don't have any immediate uses for this kind of stuff; but, I assume this would be unbelievably powerful for any piece of software that included photo management.


Reader Comments

I do hope no one caught you making faces in your web cam at work.

@Tim,

It's allll good - I'm always the first one here in the office (I love the peace and quiet of coming in early).

Pic #4 should be mood: Mr Spock, curious.

@Wil,

I would have also been more than happy to accept "The People's Eyebrow" from Face.com :D

Could this API be used to login people to an app via facial recognition? I was thinking about possibly integrating this into a flex mobile app.

Thanks - always appreciate your posts.

Does great re lips sealed/parted but for mood it has the emotional range of an eggplant. All of us can suggest much more accurate (and colorful) interpretations. LOVE your self-portraits; some are a hoot.

@Chris,

That's an awesome idea!! I think you probably could. One of the features that I believe Face.com has is the ability to build a face "catalog". Then, I think you can have it recognize faces from the catalog in the photos (to help you auto-tag / group photos I assume). What a cool idea!

@Nileen,

Thanks :) I was kind of surprised that it didn't get my kissing face. In some of the examples I saw on their site, it can definitely detect when lips are kissing.

After posting, I wondered if I was expecting too much from this software re moods. Good to hear that other examples did better. I admit I'm not sure what some of your test emotions should have been. But they all were lots of fun to see.

@Nileen,

If nothing else, it was an excuse to make some faces :)

That's interesting. It seems to have more confidence in your happiness than your sadness. :-) As for the kiss, maybe it was just looking for a different type of kiss. :-/

@Anna,

I'll try to practice my kissing - I'm probably just waaaay out of practice.

Dunno...if the face.com API can't detect "fishlips" its usefulness in anything online dating related is pretty much nil.

lol @Ben...I am sure that's not true! Maybe you should just try using the tongue. Maybe it will recognize that as a "kiss" haha

LOL - too funny. What about your, "Oh!" face (cue Office Space). Just kidding, but what would an open "O" mouth return?

Doesn't look like it gets too many emotions, but the technology is VERY interesting. If my webcam could see me when I hear, "Tell me what you want to do?" when I call my cable company, it would definitely come back, "Let me get you to a real human as fast as possible," with my irritation.

Come to think of it, I think the reason it does not recognize your kissy-face is because it is a logical program, even though it is seeming to simulate emotions. And, being a logical program, when it encounters your kissy-face, what it does is it goes through a condition. It measures the points at which the corners of your mouth are, 2 points at the top of your mouth, and then 2 points at the bottom of your mouth.

After that, what it does while considering the conditional is try to control for and decide whether or not you have big lips. Maybe the program decided you have big lips. And instead of registering a kissy-face of a small-lipped (or normal/averaged lipped person) making a kissy-face, it instead decided you were a big-lipped person making a regular face instead of a kissy-face. Imagine the headache that thing has when it is analyzing Angelina Jolie's face! That is probably why it is programmed to respond to kissy-lips as it does. If it didn't, it would probably ALWAYS register her face as kissy-lips. It's just trying not to discriminate against big-lipped persons. :-)

(maybe they could add an entry from you as to whether you had big lips or little lips. Also, it would help probably if you were to enter gender, if it didn't already do that).

Just a guess.

Awesome, Anna :-D

@Randall - haha...just trying to find an example that is relevant to today that people actually care about. :-D

@Sharon,

Ha ha, good point :)

@Anna,

Dang it! I knew that was my problem - not enough tongue ;)

@Randall,

The technology is definitely very interesting. I'm trying to think of fun ways that I can use it.

@Anna,

I assume the program would just break for Jolie's face :P

This API is interesting.

By the way, 4th pic is kinda awesome. XD

@PPShein,

Thanks :) Trying to pull off the People's Eyebrow:

http://en.wikipedia.org/wiki/File:The_ROCK.jpg

... of course, you can't compete with the Rock.

Well, it could potentially be part of a password for sure. I could see this being useful for banking websites.

"Username,"

"Password,"

"What was the name of your best man at your wedding?"

"Smile for the camera."

And likewise, you could set up a secondary homepage for an under duress situation. So, you might frown normally, but smile for an "under duress" situation which would alert authorities that something is awry (think a bank teller who is trying not to appear that they are sending an alert because they have a gun to their head). The web page would show a similar website as normal, but with different logic (possibly show fake 404 errors).

There are Mac stories out there where people have taken snapshots of the crooks who stole their laptops, remotely, which later helped them recover and prosecute the thief. Thus, if the crook has a record, authorities have a photo. If the laptop takes a photo, they can connect the dots.

Patent this and give me half the money: a keyboard that takes fingerprints of the person who is using it. You're welcome, Steve Jobs.

I smell what The Ben is cooking!

@Randall,

Ha ha - thanks for getting the reference :)

ha ha...awesome!