Hal Helms On Object Oriented Programming - Day Five

This was the last day of Hal Helms' intensive five-day course on object-oriented programming. It's 11PM already and I just lost $40 playing Texas Hold'em for the first time, so, needless to say, I'll keep this fairly short. I think the real breakthrough of the day involved persistence mechanisms. I'm not referring to any particular method of persistence, but rather to which part of the system is responsible for persisting the application data.

This isn't nearly as big a shocker as the "object integrity" bomb I dropped on you all yesterday, but I think this is fairly big in its own right:

An object should not know how to persist itself; persistence is not a valid behavior of the domain.

Again, take a moment to let that sink in. Just as it was difficult for me to first accept that Object.Validate() was not proper object-oriented programming, it was equally difficult for me to come to grips with the fact that the following code makes no sense:

Object.Save()

... or:

Service.Save( Object )

This can be difficult to swallow, so let's think about why persistence is important. Imagine a perfect world in which we had an infinite amount of computer RAM and in which the computers running our applications never crashed. Would persistence be necessary in such a world? You might want a way to serialize the entire application for transport, but there would never be a need to persist the data. When you think about this best of all possible worlds, you realize that persistence is a mechanism we have only for performance reasons and to protect against catastrophic failure (i.e., if the machine crashes, we need a way to recreate the previous state of the system). As such, it becomes clear that persistence is not actually relevant to the domain in which we are working; persistence is simply not a valid behavior of our domain entities.

If persistence is not a behavior of the domain, then how do we guard ourselves against catastrophic failure? Well, if this is not a responsibility of our domain entities, then it must be a responsibility of the application. And, if you think about it, who else cares about data persistence other than the application? An "Attorney" object certainly has no concept of a server reboot, so why would it care about persistence? The application (the glue that holds everything together and provides the overarching intent of the system) is the only thing that cares about data loss and therefore must be the only layer responsible for it.

Now, this doesn't mean that the Controller needs to know the implementation details of data persistence; data persistence and retrieval can still be done in our CRUD (Create, Read, Update, Delete) objects. But the Controller, and only the Controller, should know how to create and use these CRUD objects.
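To make the division of labor concrete, here is a minimal Python sketch (not Hal's code) of the arrangement described above. The `Attorney` fields, the `AttorneyDAO` and `AttorneyController` classes, and the in-memory dict standing in for a database are all hypothetical. The domain object has no `save()` method; the Controller alone creates the CRUD object and decides when to call it.

```python
from dataclasses import dataclass


@dataclass
class Attorney:
    """Domain entity: it models the domain and has no save() method."""
    name: str
    bar_number: str


class AttorneyDAO:
    """Hypothetical CRUD object; a dict stands in for the real database."""

    def __init__(self):
        self._rows = {}

    def create(self, attorney):
        self._rows[attorney.bar_number] = attorney

    def read(self, bar_number):
        return self._rows.get(bar_number)

    def update(self, attorney):
        self._rows[attorney.bar_number] = attorney

    def delete(self, bar_number):
        self._rows.pop(bar_number, None)


class AttorneyController:
    """Application layer: the only place that creates and uses the DAO."""

    def __init__(self):
        self._dao = AttorneyDAO()

    def register_attorney(self, name, bar_number):
        attorney = Attorney(name, bar_number)
        # Registration is a significant event for *this* application,
        # so the Controller decides that now is the time to persist.
        self._dao.create(attorney)
        return attorney

    def find_attorney(self, bar_number):
        return self._dao.read(bar_number)
```

The `Attorney` class stays reusable across applications because nothing in it refers to storage; swapping the DAO for a real database touches only the Controller's wiring.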

While there's not too much to this post, I think there's a great depth of content here. As I begin to understand what a domain model really is, and what it means for an application to live in a particular domain, I am beginning to think about my application architecture in a radically different way. The roles of the different tiers are becoming much clearer.

Hopefully tomorrow, I'll have time to provide an overall review of the course.

Reader Comments

I'd say this is a matter of subjective opinion, as well as an issue of OO theoretical purity vs. pragmatism in reality. Yes, in an ideal world with unlimited RAM and power, we'd never need to worry about persistence. However, this is not an ideal world. And RAM and power will be issues for a very long time.

That said, I don't think many people would really say that object.save() or service.save(object) mean that the domain objects "know how to persist themselves". They simply have behavior that makes them useful. Would it sound better or more pure if I said object.removeFromMemory() or persistenceService.persist(object)?

As long as the behavior that handles this is isolated and keeps the domain objects as cohesive as possible, I don't really see a problem with this. Clearly *something* in the system has to know about the harsh reality that objects can't live in RAM forever. I would prefer not to have my controller coupled to this. I also don't want it in my domain objects. That is why the service layer seems like a good place to oversee this, by leveraging persistence-specific objects like Gateways that isolate that logic.

I don't consider a Gateway a domain object, nor do I consider a Service to be a domain object. So I may simply be agreeing with Hal in a roundabout way and the only difference is in how we're labeling things, but if I do userService.save(user), I don't see how that dilutes the purity of the domain object (the User) at all.

On one last note, what happens if the calling code is a web service or AMF request? This doesn't even talk to the controller (at least not the controller we typically refer to, such as Mach-II). Since the service objects are what expose the model to the world in an application and UI-neutral way, there isn't any other place for this knowledge TO be than in the service layer. Thoughts?
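Brian's alternative can be sketched the same way. This is a minimal Python illustration under assumed names (`UserGateway`, `UserService.save`, `UserService.get`), with a dict in place of a real database; it only shows the layering, with the service as the single UI-neutral entry point and the gateway isolating the persistence logic.

```python
from dataclasses import dataclass


@dataclass
class User:
    """Domain object: no knowledge of storage."""
    username: str


class UserGateway:
    """Persistence-specific object; a dict stands in for the database."""

    def __init__(self):
        self._table = {}

    def save(self, user):
        self._table[user.username] = user

    def find(self, username):
        return self._table.get(username)


class UserService:
    """UI-neutral entry point to the model for any caller:
    an HTML controller, a web service, or an AMF/Flex request."""

    def __init__(self, gateway):
        self._gateway = gateway

    def save(self, user):
        # The service oversees persistence; the gateway does the work.
        self._gateway.save(user)

    def get(self, username):
        return self._gateway.find(username)
```

In this arrangement `userService.save(user)` leaves the `User` class exactly as pure as in the Controller-owned version; the two approaches differ only in which layer holds the reference to the gateway.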

I have to agree with Brian on this one. This is something I have been struggling with in your posts today and yesterday. If you make your controller this fat beast that manages your validation, your persistence, your DI and everything else, then you completely lose the ability to use anything other than THAT controller to work with your domain objects. If you want to put a Flex front end or some other remote service on the application, you have to be willing to rewrite it completely.

That said, I have really been enjoying your posts this week. They have really helped me think a lot about the way I am doing things. While I may not agree completely, you have helped me examine my ideas about it.

Brian, the real point is that objects should not know how to save themselves. Of course, someone must, and that's the point of a data persistence layer. But when to call that persistence layer is a responsibility of the controller, which alone knows when a "significant event" occurs that calls for persisting the object.

@Hal, I agree that the controller is the piece that knows when to persist the data of an application. But are you saying that the controller should be talking to the persistence layer directly?

My controller does know when to persist, but it calls for persistence through the service layer...

service.save(object);

Then the service layer talks with the persistence layer.

Does this violate anything that you or Ben have been talking about? It is the controller that is "controlling" when persistence is called, but it is passing the work off to the service.

I know I read this somewhere, and I think it says it very well: a service layer is a controller for the model, while your other controller is really the controller for the UI. I could have this wrong, but for me this makes sense.

I now have a consistent API to my model from my service layer. I won't speak for Hal, Brian, or others, but to me the goal seems to be supporting other types of UI and, more generally, having more flexibility if something else comes down the road. It's another abstraction that has its pros and cons.

Ben, thanks for the series! It's been a great read. I didn't get a chance to finish day 4, but I will this weekend. Hal, Brian, and others, thanks for chiming in; I'm sure everyone else and I are learning a whole lot!

I agree with Javier, this is the approach I have been taking.

Thanks for all these posts Ben. Food for thought.

I have been in the DomainObject.validate() and DomainObjectService.save(DomainObject) camp, and although I'm open to the idea of moving validation up into the service layer, I'm not up for moving the persist messages up to the controller.

I'd love to see a quick example app from Hal as I'm sure I'm misunderstanding him on this.

OO thinkers like Martin Fowler suggest that persistence gateways, logging, transaction management, etc. are called from the service layer too (let's ignore AOP for a moment!), and I would also suggest you read his Patterns of Enterprise Application Architecture for another take on this.
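As a rough illustration of a service layer that coordinates a gateway, a transaction and logging, here is a Python sketch using the standard library's `sqlite3` and `logging` modules with an in-memory database. It is not taken from Fowler's book; the `users` table, `UserGateway` and `register_all` are hypothetical. Either every username in a batch is stored or none is.

```python
import logging
import sqlite3

logger = logging.getLogger("user_service")


def make_connection():
    """In-memory SQLite database standing in for the real one."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (username TEXT PRIMARY KEY)")
    return conn


class UserGateway:
    """Persistence gateway: the only class that knows any SQL."""

    def __init__(self, conn):
        self._conn = conn

    def insert(self, username):
        self._conn.execute("INSERT INTO users (username) VALUES (?)", (username,))

    def count(self):
        return self._conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]


class UserService:
    """Coordinates the gateway, the transaction and the logging."""

    def __init__(self, conn):
        self._conn = conn
        self.gateway = UserGateway(conn)

    def register_all(self, usernames):
        try:
            # Using the connection as a context manager commits on success
            # and rolls back if an exception escapes the block.
            with self._conn:
                for name in usernames:
                    self.gateway.insert(name)
        except sqlite3.IntegrityError:
            logger.warning("Rolled back registration of %s", usernames)
            return False
        logger.info("Registered %d users", len(usernames))
        return True
```

A duplicate username in the second batch makes the whole batch roll back, so a user inserted earlier in that same batch does not survive.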

That said, I'd still love to attend one of Hal's courses!

Hey Hal, I'm curious to know what would happen if the call to the model is coming from a Flex application or a web service call. Are you saying you'd want to route this request to the same controller that is handling HTML requests? Or is what I am calling a service layer what you are calling a controller in a more general (non-UI-specific) sense?

@All,

I can't really answer a lot of these questions, as I am very new to thinking in these terms. What I can say, though, is that the Controller is not becoming as fat as people might think it is. You still have all of the processing encapsulated behind various calls to the service layer. For example, we walked through an "Add New User" scenario in which each username had to be unique. Here are some thoughts we had on this:

1. A user has to have a username.

2. A user has to have a valid username which means that it has the allowable set of characters (maybe spaces are not valid).

3. A user has to have a unique username.

Now, 1 and 2 can be handled by the User object, but the user has no concept of whether its username is unique. In fact, this might not even be a constraint for all applications that have users (maybe email is what needs to be unique in one app and username is just a personal preference). In that case, username uniqueness is a constraint of the given application; therefore, this check must be done in the app-specific portion of the system, which is the Controller.
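The split between rules 1 and 2 and rule 3 might look like this in a minimal Python sketch. The allowed-character rule (letters, digits and underscores) is an assumption chosen to match the "maybe spaces are not valid" example, and `check_username_unique` is a hypothetical helper the Controller would call with whatever set of existing usernames the application has.

```python
import re


class User:
    """Rules 1 and 2 belong to the User itself."""

    # Assumed character rule for illustration: letters, digits, underscores.
    _ALLOWED = re.compile(r"[A-Za-z0-9_]+")

    def __init__(self, username):
        if not username:
            raise ValueError("A user has to have a username.")
        if not self._ALLOWED.fullmatch(username):
            raise ValueError("The username contains characters that are not allowed.")
        self.username = username


def check_username_unique(username, existing_usernames):
    """Rule 3 is a constraint of this application, not of User.

    Returns a list of error messages; empty means the name is available.
    """
    if username in existing_usernames:
        return ["That username is already taken."]
    return []
```

An application that makes email the unique field would simply not call `check_username_unique`, and `User` would not need to change.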

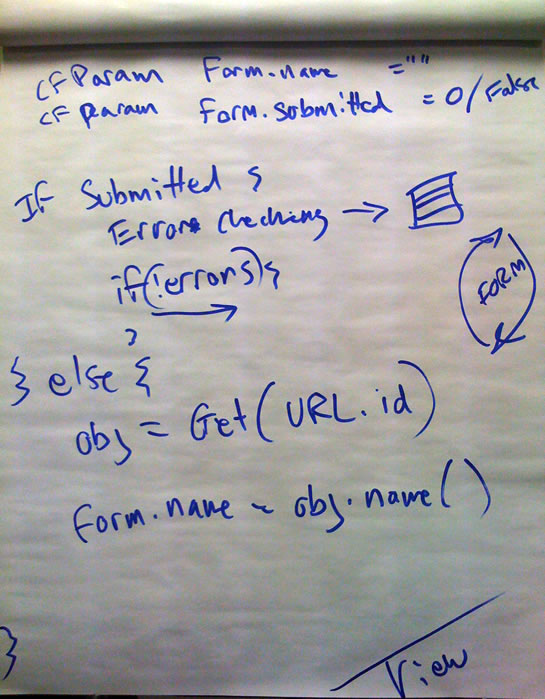

Pseudocode for the controller might look like this:

    // Validate the submitted form; the validation object builds the error messages
    errors = NewUserForm.validate( FORM );

    if (!errors.size()){
        user = UserService.CreateUser( FORM.username );
        // Persisting is the Controller's decision
        UserDAO.save( user );
        location = "confirmation.cfm";
    }

The Controller would really never get more "fat" than this. The form validation and the appropriate error messages would be in the validation object. The controller would simply call the parts that needed to get called.
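For readers who want to run it, the controller pseudocode above can be translated into a small Python sketch. `NewUserForm`, `UserService.create_user` and `UserDAO` mirror the names in the pseudocode, but their bodies are hypothetical, a dict stands in for the database, and the uniqueness check from the earlier discussion is made explicit as a controller step. The function returns the redirect target along with any errors.

```python
from dataclasses import dataclass


@dataclass
class User:
    username: str


class NewUserForm:
    """Validation object: owns the form rules and their messages."""

    @staticmethod
    def validate(form):
        username = form.get("username", "")
        if not username:
            return ["Please enter a username."]
        if " " in username:
            return ["Usernames may not contain spaces."]
        return []


class UserService:
    @staticmethod
    def create_user(username):
        return User(username)


class UserDAO:
    """CRUD object; a dict stands in for the database."""

    def __init__(self):
        self.rows = {}

    def exists(self, username):
        return username in self.rows

    def save(self, user):
        self.rows[user.username] = user


def add_new_user(form, dao):
    """Controller action: returns (location, errors)."""
    errors = NewUserForm.validate(form)
    # Uniqueness is an application rule, so the controller asks the DAO.
    if not errors and dao.exists(form["username"]):
        errors.append("That username is already taken.")
    if errors:
        return None, errors
    user = UserService.create_user(form["username"])
    dao.save(user)
    return "confirmation.cfm", []
```

Even with the uniqueness step spelled out, the controller action stays a handful of calls into other objects.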

And, when it comes to adding remote interfaces, there's not really that much difference. You are still connecting to some sort of "Remote Controller" that would be able to handle all the same logic.
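A remote interface could reuse the same pieces. In this hypothetical Python sketch, an HTML controller and a "Remote Controller" that speaks JSON call the same validation function and the same DAO; only the shape of the response differs (`new_user.cfm` and the JSON fields are made-up names).

```python
import json
from dataclasses import dataclass


@dataclass
class User:
    username: str


class UserDAO:
    """Hypothetical CRUD object backed by a dict."""

    def __init__(self):
        self.rows = {}

    def save(self, user):
        self.rows[user.username] = user


def validate_new_user(data):
    """Shared validation (reduced to a single rule here)."""
    return [] if data.get("username") else ["Please enter a username."]


class HtmlUserController:
    def __init__(self, dao):
        self.dao = dao

    def add_user(self, form):
        errors = validate_new_user(form)
        if errors:
            return {"location": "new_user.cfm", "errors": errors}
        self.dao.save(User(form["username"]))
        return {"location": "confirmation.cfm", "errors": []}


class RemoteUserController:
    """Same logic, but answers with JSON instead of a redirect."""

    def __init__(self, dao):
        self.dao = dao

    def add_user(self, payload):
        data = json.loads(payload)
        errors = validate_new_user(data)
        if not errors:
            self.dao.save(User(data["username"]))
        return json.dumps({"success": not errors, "errors": errors})
```

Note that the orchestration (validate, then persist) is repeated in each controller; that duplication is the trade-off the earlier comments raise when they argue for keeping it in the service layer.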

Now, when you get into things like caching mechanisms, I assume it would stay pretty much as is, except that you would decorate the UserService with whatever object was doing the caching.
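Decorating the UserService for caching could look like the following minimal Python sketch. `UserService.get_user`, the `lookups` counter and the dict used as a backing store are assumptions for illustration. Because `CachingUserService` exposes the same `get_user` method, the Controller can use it without changing.

```python
class UserService:
    """Hypothetical service that loads users from a slow backing store."""

    def __init__(self, store):
        self.store = store
        self.lookups = 0  # counts trips to the backing store

    def get_user(self, username):
        self.lookups += 1
        return self.store.get(username)


class CachingUserService:
    """Decorator: same interface as UserService, with a cache in front."""

    def __init__(self, inner):
        self._inner = inner
        self._cache = {}

    def get_user(self, username):
        # Misses (None) are cached as well, so repeat lookups stay cheap.
        if username not in self._cache:
            self._cache[username] = self._inner.get_user(username)
        return self._cache[username]
```

Whether the Controller receives the plain service or the decorated one becomes a wiring decision, which keeps caching out of both the Controller and the domain objects.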

The kicker here is that all of this is done with reusability in mind. If you are *not* planning on reusing your CFCs in different applications, then that's a completely different ball game. This is where I think "OO Purity" meets "OO Reality". In a pure frame of mind, we want to make these objects so pure that they will save us time on all future applications that need them. However, in reality, we might simply be more concerned with "how can I get this project done faster NOW?"

And, of course, my brain is still trying to absorb all of this :)