What Data Should I Push Over Realtime WebSockets?

At InVision, we use Pusher App to push realtime data over WebSockets from the server down to the client. Pusher, as a service, has been awesome! But, as our application has grown considerably, I'm finding a desire to change my realtime-push strategy. Right now, we push "data;" and, at first, that worked perfectly. As our UI has evolved, however, I'm thinking that we need to start pushing smaller, simpler "events" that may or may not trigger subsequent client-side requests.

When all the instances of a given "data element" within the User Interface (UI) look the same, it makes sense to push "data" over the WebSockets. Doing so allows all the relevant modules to seamlessly update their locally-cached data models without any additional communication. But, as the UI elements evolve in different directions, the data you push to the client may work well for one module but fall short for another.

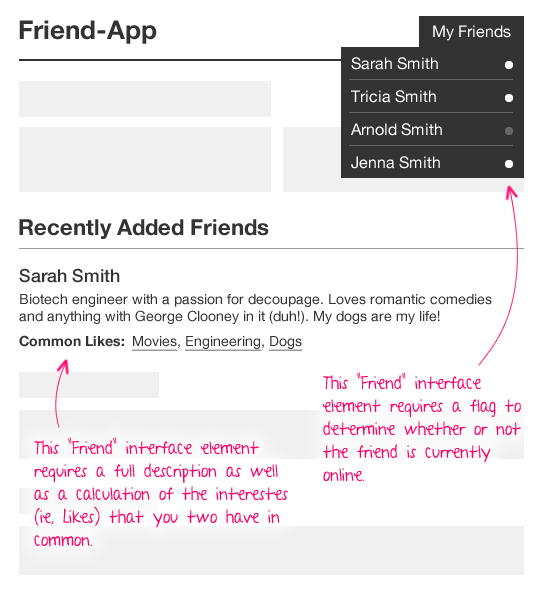

As an example, take a look at the following user interface (UI) for some imaginary social networking application. Notice that it has "friends" in the dropdown menu as well as in the primary page content:

| |

|

|

||

| |

|

|

||

| |

|

|

If I were to use WebSockets to push a message for "FriendAdded," what data would it need to contain? Well, if I wanted to update all client-side friend-lists seamlessly, it would have to contain enough data to cover the most complex friend-oriented user interface elements. So, in this case, the single push message would have to contain an online-status flag, a full friend description, and the "common likes" calculation. And, if any one of the UI elements continues to grow in complexity, the WebSocket push will have to grow accordingly.

Essentially, pushing "data" couples your realtime push to all of the relevant UI elements.

A more flexible approach may be to push realtime "events" that consist of nothing more than an event-type and a very minimal set of identification data. So, going back to the Friend example above, the "FriendAdded" event might just push:

- eventType: "FriendAdded"

- id: 4

- name: "Sarah Smith"

The minimum-event-data would depend on the type of event. For example, if a friend was added to a "group," you'd probably want to include the group ID in the event:

- eventType: "FriendAddedToGroup"

- groupID: 81

- friendID: 4

- name: "Sarah Smith"
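To make those two payloads a bit more concrete, here is a minimal TypeScript sketch of how the client might type and validate them. The field names come from the lists above; the `RealtimeEvent` union and the `parseEvent()` helper are hypothetical names I'm using for illustration, not part of Pusher or any other library.

```typescript
// Event shapes that mirror the two example payloads above.
interface FriendAdded {
  eventType: "FriendAdded";
  id: number;
  name: string;
}

interface FriendAddedToGroup {
  eventType: "FriendAddedToGroup";
  groupID: number;
  friendID: number;
  name: string;
}

// Hypothetical union of every event the client knows how to handle.
type RealtimeEvent = FriendAdded | FriendAddedToGroup;

// Hypothetical helper: parse a raw push message and return null for
// anything that is not a well-formed, known event.
function parseEvent(raw: string): RealtimeEvent | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null;
  }
  if (typeof data !== "object" || data === null) {
    return null;
  }
  const { eventType, id, groupID, friendID, name } = data as Record<string, unknown>;
  if (typeof name !== "string") {
    return null;
  }
  if (eventType === "FriendAdded" && typeof id === "number") {
    return { eventType: "FriendAdded", id, name };
  }
  if (
    eventType === "FriendAddedToGroup" &&
    typeof groupID === "number" &&
    typeof friendID === "number"
  ) {
    return { eventType: "FriendAddedToGroup", groupID, friendID, name };
  }
  return null;
}
```

Because each event carries its own `eventType`, a module can switch on that one field and ignore the events it doesn't care about.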

If this minimum-event-data can be consumed by a given user interface module, that's a bonus. If not, the UI module, such as "Recently Added Friends," may have to make a subsequent AJAX (Asynchronous JavaScript and XML) request back to the server to gather the fully relevant data payload. This makes the realtime communication a bit more "chatty;" but, it also decouples the realtime push from the user interface, and allows all the user interface modules to evolve independently.
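Here is a rough sketch of what that might look like on the client, assuming two modules that react to the same "FriendAdded" event. Everything in it is hypothetical: the class names, the `FriendDetails` shape (based on the online-status flag, description, and "common likes" fields mentioned earlier), and the injected `fetchFriend()` function, which stands in for whatever AJAX call the application would actually make.

```typescript
// Minimal event pushed over the socket (same shape as the "FriendAdded" example).
interface FriendAddedEvent {
  eventType: "FriendAdded";
  id: number;
  name: string;
}

// Hypothetical full record that only the richer module needs.
interface FriendDetails {
  id: number;
  name: string;
  isOnline: boolean;
  description: string;
  commonLikes: number;
}

// Stand-in for the follow-up AJAX request (the real endpoint is an assumption).
type FetchFriend = (id: number) => Promise<FriendDetails>;

// The dropdown only shows names, so the event alone is enough.
class FriendDropdown {
  names: string[] = [];

  onFriendAdded(event: FriendAddedEvent): void {
    this.names.push(event.name);
  }
}

// "Recently Added Friends" shows more than the event carries, so it
// goes back to the server for the full payload.
class RecentlyAddedFriends {
  friends: FriendDetails[] = [];
  fetchFriend: FetchFriend;

  constructor(fetchFriend: FetchFriend) {
    this.fetchFriend = fetchFriend;
  }

  async onFriendAdded(event: FriendAddedEvent): Promise<void> {
    const details = await this.fetchFriend(event.id);
    this.friends.unshift(details); // newest first
  }
}
```

The push payload never has to change when one module grows; only that module's follow-up request does.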

I'm relatively new to WebSockets and to architecting applications that consume realtime events. So, maybe this idea is crazy; or, maybe it's really obvious and I'm just late to the party. In either case, I do feel "pain" in my current realtime push strategy and I do need to change something.

Reader Comments

No, Ben, you're not crazy, nor would you necessarily be late to the game. When you start dealing with applications that are being run on multiple computers, you start revisiting a lot of your practices. For me, this came when we started writing a computer application that expected to access the database on another computer.

You start comparing things.

* Will it be faster for me to get all the data in one shot or should I make multiple queries that get more specific data?

* How much data should I get for this table?

* Do I have the know-how and capability to get some of the results now and add to them later?

Many traditional programmers have written applications for a stand-alone machine where data moves almost instantaneously. When you have to consider bandwidth and, with web applications, browser capabilities, you've added a lot of complexity to the development process.

Generally, with web applications, if you can reliably expect your users to have browsers capable of running JavaScript, consuming web services, and conveying that information to the user in a meaningful way, you transmit everything on a need-to-know basis. This means little chunks of information so you can rapidly update snippets of information here and there on the page.

@Paul,

Also, I find that what makes sense at one point in the app doesn't make sense later on. The problem I am having now is that making the shift is not a simple task. Had I had a different mindset from the get-go, this would be easier.

@Ben,

20/20 hindsight, eh? This is something that's come up in the past: Our applications almost always reach a "maturity" which defies the mindset we had when we started. We can plan ahead as much as possible, but as the application matures we will almost always find a point where we have to (1) rewrite infrastructure or (2) apply band-aids and write kludges because we hadn't anticipated something.

Let that be a lesson to you. :P

@Ben,

You should check out http://www.meteor.com/. I love their logic and the way they handle data/websockets and interacting with the client/server.

I love Pusher; however, my biggest problem is its limited ability to handle bi-directional communication between server and client. So, with my app, I run Pusher/WebSockets for general features, but I'm starting to build my own socket.io server to handle higher-level bi-directional communication. I've been able to push data back and forth significantly faster than with simple AJAX, and to reduce the amount of data sent over the pipe that the client isn't using.

Hopefully, some day I will be fully running my own socket.io system without Pusher, but that is a year+ away.

But my end game is to switch to meteor.com once they reach 1.0+ and support SQL databases.

I've thought about the exact same things, though it came to mind right away as a decision I needed to make.

I've never really liked the thought of a lot of back and forth via WebSockets. If you're running a single component, it looks really stupid to have a pull trigger a push trigger a pull trigger a push, etc. Why don't we just include the logic from the start in order to have a single pull and push?

But then you start having multiple components that use similar data and they each need different bits of info, so you want it all separated. In the end, decoupling for maintainability is probably the best idea, unless it becomes a noticeable performance issue due to the number of requests. At that point, you'll need to find some type of middle ground.

Using event style works great when you can deal with that at the client without further requests. A pushed event that requires another network request before it can be rendered to the end user is going to hurt your performance. The network overhead, latency and bandwidth, particularly on mobile networks, would make this costly to your end user.

The approach we typically take is that if we can compress it into one request, we do so (compress being the operative term). If it's a really large dataset, then we look at the user's context for having access to that data; does it really need to be a real-time push, or are we wasting bandwidth on something they're never going to see/use? If we find it's a once-in-a-while view, we de-couple that and use a subsequent request to pull that data while they see a spinner.

Real-time data gets complicated fast. Most folks consider the server, but few think of the DOM. Depending on how many points within your existing page need to be updated with that data, you have to start thinking of how to handle DOM updates (otherwise you'll have to start fighting jank).

I use websockets in a lot of apps; they work great when you need bi-directional communication, but if you're just pushing data down to the client, it's a bit overkill. Based on your description and keeping all things equal, I wouldn't use a websocket. I'd use a Server-Sent Event. Less to wire up on the JavaScript side, easier to implement on an existing server (can use an HTTP transport). My two cents.
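For anyone who hasn't seen Server-Sent Events, here is a small TypeScript sketch of the wire format, assuming the server sends each event as JSON. The `formatSseMessage()` name is made up for illustration; the `event:` / `data:` fields and the blank line that ends a message are part of the SSE format, and the response is served with the `text/event-stream` content type.

```typescript
// Hypothetical helper: format one Server-Sent Events message.
// An SSE message is a series of "field: value" lines ended by a blank line.
function formatSseMessage(eventType: string, payload: Record<string, unknown>): string {
  // JSON.stringify without an indent argument emits no newlines,
  // so the payload always fits on a single "data:" line.
  const json = JSON.stringify(payload);
  return `event: ${eventType}\ndata: ${json}\n\n`;
}
```

On the client, the browser's `EventSource` object receives these messages, and a listener registered with `addEventListener("FriendAdded", ...)` gets the JSON string in `event.data`.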

@Jeremy,

I've heard lots of really good things about Meteor, but have not gone any farther than the screen-cast myself. That said, I believe that Pusher does allow for client-initiated events, if you want to publish user-to-user. If you want to push from client-to-server, I think you still have to use traditional AJAX requests.

I'll have to take a look at Meteor, see what everyone is talking about.

@Joe,

I wish I had thought through it a bit more at the onset of the project; unfortunately, it was the kind of thing where we were already overdue from the moment the project started :( A little more team communication and collaboration would have helped as well.

Another nice thing about using the event-oriented approach is that you don't need to assemble data that you don't need. By that, I mean that if you have users that may/may-not be listening on a channel, you don't have to push data to users that aren't listening. You can just push events, and then any users that *are* listening, can make requests for further information.

I'm not explaining that well, sorry.

@Justin,

You bring up a really good point! When it comes to WebSockets, I have been trying to treat them as a "nice to have" and not as a critical aspect of the application. So far, I've been using the WebSockets to make the UI feel a bit more responsive; but, as you navigate around the app, the app still makes the traditional AJAX requests when needed.

For example, let's say I have list X. If a remote user adds an item to that list, the client will get a WebSocket push that said item was added; however, if the user goes away from the list and comes back to the list, we *still* make a request to the server to get the most up-to-date list X data.

So, the WebSockets simply update the UI faster; but, I am not depending on WebSockets. I guess this means that on less powerful devices (or ones where the WebSockets fail), you can turn it off, or at least expect that user to have a decent experience.

Ben, thanks for your post to Justin @ 4:33. It sort of answers the question I had about websockets in cf10. I've played with them a bit and learned you can't rely on them. Websockets seem to break, especially if there is any sort of disruption to the internet connection like you might get on a mobile device.

I was going to ask how you deal with the problem of websockets failing; all I've been able to do is display a last-updated time and warn users they still might need to refresh to get the latest data.

@Ben I honestly think you have to find a mix of request types that work. WebSockets and SSE are a core part of a lot of apps that I've built lately, primarily because I couldn't build said apps otherwise (the data would simply be too old to be useful to the end users). That said, I also have long-poll fallbacks for when things aren't working out so hot (primarily when I can't get a stable connection, in which case I implement an offline ping check to one of our endpoints). As you said, if all else fails, things need to gracefully give you the best experience possible.

@me If your websockets are failing, I'd look at your heartbeat. Depending on how your WebSocket server implementation is set up, the connected client may be required to send a ping that keeps the connection active. I'd also catch onclose and onerror and implement an auto-reconnect. If that auto-reconnect doesn't work, then fall back to long polling. If that doesn't work, then tell the user they're offline.
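As a rough TypeScript sketch of that fallback chain (reconnect, then long polling, then "offline"), the decision itself can be a small pure function that the onclose/onerror handlers call. The retry limit, the base delay, and the exponential backoff are all assumptions for illustration, and the heartbeat ping is left out since it depends on the server.

```typescript
type NextStep =
  | { action: "reconnect"; delayMs: number }
  | { action: "longPoll" }
  | { action: "offline" };

// Illustrative thresholds only; tune these for a real application.
const MAX_SOCKET_RETRIES = 3;
const BASE_DELAY_MS = 1000;

// Decide what to do after the socket closes or errors.
//   socketRetriesSoFar: reconnect attempts already made since the last good connection
//   longPollFailed:     whether the long-polling fallback has also failed
function nextStep(socketRetriesSoFar: number, longPollFailed: boolean): NextStep {
  if (socketRetriesSoFar < MAX_SOCKET_RETRIES) {
    // Exponential backoff (an assumption): wait 1s, then 2s, then 4s.
    const delayMs = BASE_DELAY_MS * Math.pow(2, socketRetriesSoFar);
    return { action: "reconnect", delayMs };
  }
  if (!longPollFailed) {
    return { action: "longPoll" };
  }
  return { action: "offline" };
}
```

Keeping the decision separate from the socket code makes it easy to test without a network connection.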

Thanks Justin

@Justin, @Me,

Sorry, I didn't mean to imply that all WebSocket usage is a "nice to have." I was really limiting that to cases in which events can be leveraged for a faster experience, but not necessarily for a critical use-case.

For example, if I was building a chat room, WebSockets would be a "must." Otherwise, you'd have to jump through too many hoops to get it to work. Or, at the *very least*, you have to defer that kind of implementation-level fallback to the WebSocket library (meaning, a WebSocket library that may choose to fall back to long-polling in certain environments); but, that said, as far as you [the developer] are concerned, that decision is encapsulated.

So, to sum up, sometimes WebSockets are kind of like "progressive enhancements." And, sometimes, they are a core part of the "platform" on which you are building your application. Sorry if I was making too-broad sweeping statements earlier.

@Ben, Thanks

Relevant articles include: Bi-directional communication with client-to-client messaging in real time: www.bennadel.com/blog/2213-Sending-Client-To-Client-Realtime-Messages-With-The-PubNub-JavaScript-Library.htm

and a ColdFusion wrapper: www.bennadel.com/blog/2214-PubNub-cfc-A-ColdFusion-Wrapper-For-The-PubNub-Realtime-Messaging-Platform.htm

Sorry for my understanding. I am facing a problem with WebSockets while transmitting image data to over 50 clients. CPU utilization goes to 100% and some clients miss the image data. But I have solved my problem by using a dynamic image link. In this case, I transmit only the image name to the web client and create an image link with that name. Then the CPU usage of the Node process is only 7 to 13%.

Could you suggest which one is better?

Transmit the image data to every client, or transmit only the name of the image to every client and have each client send an HTTP request back to the server in the form of an image link?